
def delete_cells_by_point_index(self, indices):

"""

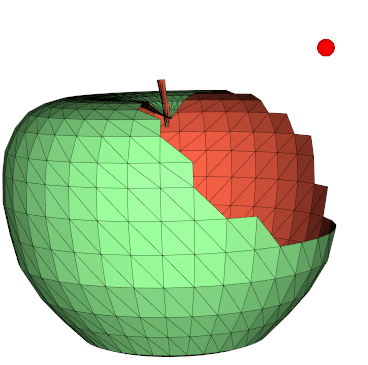

Delete a list of vertices identified by any of their vertex index.

See also `delete_cells()`.

Examples:

- [delete_mesh_pts.py](https://github.com/marcomusy/vedo/tree/master/examples/basic/delete_mesh_pts.py)

"""

cell_ids = vtki.vtkIdList()

self.dataset.BuildLinks()

n = 0

for i in np.unique(indices):

self.dataset.GetPointCells(i, cell_ids)

for j in range(cell_ids.GetNumberOfIds()):

self.dataset.DeleteCell(cell_ids.GetId(j)) # flag cell

n += 1

self.dataset.RemoveDeletedCells()

self.dataset.Modified()

self.pipeline = OperationNode("delete_cells_by_point_index", parents=[self])

return self

Are there any issues with parallelising these two for loops, even if it's just by setting the number of jobs with joblib? The current implementation doesn't scale well (e.g. deleting half of a 150,000-point mesh). I'm not sure how VTK datasets work under the hood.


I think that should be possible: the DeleteCell() call only flags a cell for deletion, and the actual work is done by RemoveDeletedCells(). But I don't know which one is the bottleneck.
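If the flagging loops turn out to be the slow part, one option that avoids parallelising them at all is to compute the set of affected cells in a single vectorised NumPy step. The sketch below is only an illustration under stated assumptions: it works on a plain connectivity array with a fixed number of points per cell (e.g. an all-triangle mesh), and the helper names `cells_touching_points` and `cells_touching_points_loop` are hypothetical, not part of vedo or VTK.

```python
import numpy as np


def cells_touching_points(cells, point_ids):
    """Boolean mask of cells that use at least one of point_ids.

    Assumes `cells` is an (n_cells, n_points_per_cell) integer array,
    i.e. every cell has the same number of points.
    """
    cells = np.asarray(cells)
    return np.isin(cells, np.unique(point_ids)).any(axis=1)


def cells_touching_points_loop(cells, point_ids):
    """Same result with explicit Python loops, kept only for comparison."""
    wanted = {int(i) for i in point_ids}
    return np.array(
        [any(int(p) in wanted for p in cell) for cell in cells], dtype=bool
    )


# Tiny example: four triangles over six points.
cells = np.array([[0, 1, 2], [1, 2, 3], [2, 3, 4], [3, 4, 5]])
to_delete = [0, 5]

mask = cells_touching_points(cells, to_delete)  # cells to drop
kept_cells = cells[~mask]                       # cells that survive
```

Here the first and last triangles are dropped because they use points 0 and 5. Rebuilding a mesh from `kept_cells` is not shown, and whether this is actually faster than the VTK calls for a given mesh would need to be measured.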
